Upgrade Your Workflow, Part 1: Building OSINT Checklists

With so many cool new techniques and tools being released every day, I’ve caught myself going down rabbit holes or chasing false leads during engagements. Sometimes I’ll get so bogged down with tunnel vision that I make OPSEC mistakes or delay an entire testing objective. At best, this could get my attacks discovered and waste time; at worst, a vulnerability may go unnoticed. In conversations with other Red Team practitioners, we decided that using checklists would be a solid solution.

This blog post is part one (1) of a series on the different checklists I personally use on engagements. While the tools and methods you use may be different, the overall goal of avoiding mistakes and ensuring coverage should stay the same.

The first checklist I’d like to cover is OSINT. When obtaining information on a target, I generally find there are two (2) camps: cast a wide net for information on an organization and its employees or take a deep dive on a single person. This post will cover the former.

Disclaimer: The ideas and methods here are drawn from various sources; they are not intended to be a be-all, end-all solution. They’re just what works for me.

OSINT OPSEC:

The first step in starting an engagement is picking a pre-built persona under which I’ll be operating. Generally speaking, I’ll use something like https://www.fakepersongenerator.com/ to create a persona and register for various services like Google (Gmail), Facebook, LinkedIn, etc. These accounts are sometimes reused between engagements to build an online presence.

The next step is to set up a Virtual Private Server (VPS) from which to execute my tools. Your choice of provider can matter, as some IP pools used by VPS providers have been blacklisted. I personally use Amazon Web Services (AWS). In addition to running tools from the VPS, I also like to install OpenVPN, so if I find a tool/script that isn’t easily ported to the OS used on the VPS, I can just route its traffic from a dedicated testing VM.

Below is a basic set of tools I have installed on my OSINT VMs from the get-go. What you use may be different, but creating a list can be helpful if you eventually want to automate this process.

| Tools | Source |
| --- | --- |
| Go | https://golang.org/ |
| Amass | https://github.com/OWASP/Amass |
| SubJack | https://github.com/haccer/subjack |
| OpenVPN | https://openvpn.net/ |
| Domreg-enum.sh | https://github.com/ninewires/scripts |
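As a first step toward automating that list, here is a minimal Python sketch that reports which tools are not yet on the PATH of a fresh VM. The binary names (`go`, `amass`, `subjack`, `openvpn`) are my assumptions about how each tool is installed, and Domreg-enum.sh is left out because it is a script rather than an installed binary.

```python
import shutil

# Binary names are assumptions for illustration; adjust to match your install.
REQUIRED_TOOLS = {
    "go": "https://golang.org/",
    "amass": "https://github.com/OWASP/Amass",
    "subjack": "https://github.com/haccer/subjack",
    "openvpn": "https://openvpn.net/",
}


def missing_tools(tools, which=shutil.which):
    """Return the entries of `tools` whose binary is not found on PATH."""
    return {name: url for name, url in tools.items() if which(name) is None}


if __name__ == "__main__":
    for name, url in missing_tools(REQUIRED_TOOLS).items():
        print(f"missing: {name} (see {url})")
```

The `which` parameter is only there so the lookup can be swapped out when testing; in normal use the default `shutil.which` is all you need.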

Note: The people at https://hackplanet.io have a great set of videos (OSINT part 1 and OSINT Part 2) covering additional OPSEC steps you can take with your browser as well as tools you can use during your reconnaissance. I highly recommend you check them out.

Collect Domain Info:

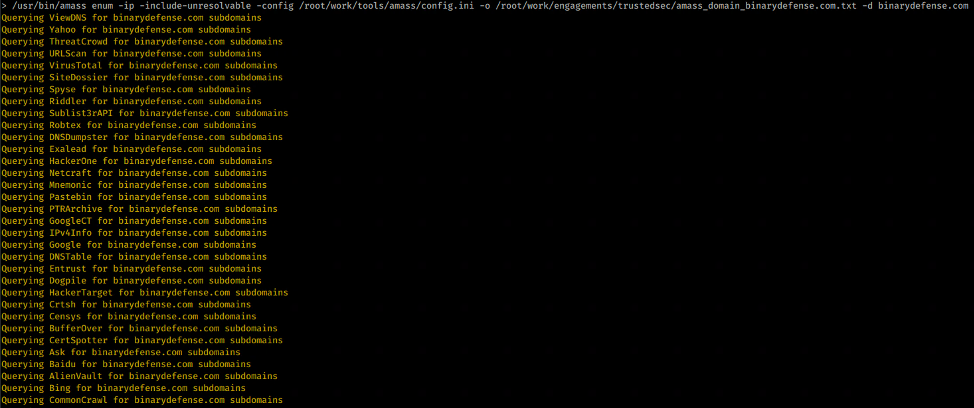

The next step is the passive collection of domain information. There are lots of tools that can do this, but I prefer a combination of OWASP’s Amass and Rapid7’s Project Sonar. Amass is great as it combines various sources and is actively maintained. While it’s very useful out of the box, you can extend it even further by supplying a config file provisioned with API keys to various services. Many of these services offer limited free access to their API, as long as it’s not abused.

| API Service | Cost | Rate Limit |
| --- | --- | --- |
| AlienVault | Free | A given client is limited to 100 GET and 50 POST requests per second for a USM Anywhere or USM Central subdomain. |
| BinaryEdge | Free | 250 requests per month |
| Censys | Free | 250 queries per month and 1,000 results per query |
| CIRCL | Unknown | Unknown |
| DNSDB | Free | 30-day trial, 100 queries/day |
| GitHub | Free | 30 requests per minute |
| NetworksDB | Free | 1,000 per month |
| PassiveTotal | Free | 15 per day |
| SecurityTrails | Free | 50 per month |
| Shodan | Free | 100 per result |
| Spyse | Free | 6,000 results per query |
| | Free, but reviewed | 250 per month for last 7 days |
| Umbrella | Not Free | Unknown |
| URLScan | Free | 1 per 2 seconds |
| VirusTotal | Free | 4 per minute |
| WhoisXML | Free | 30 per second |

For Amass, I like running with the following switches:

| Switch | Action |
| --- | --- |
| -ip | Lists the IP if resolved |
| -include-unresolvable | Lists names even if not resolved |
| -config | Path to file where we have our API keys |
| -o | Out file where data is saved |
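To show how those switches fit together, here is a rough Python sketch that assembles a single Amass run. The `enum` subcommand and the `-d` flag are not in the table above; they are my assumptions about how the target domain is passed, and the unresolved-names switch is spelled `-include-unresolvable` as I understand Amass spells it. The file names are placeholders. Flags change between Amass versions, so confirm everything against `amass enum -h` on your install.

```python
import shlex


def build_amass_command(domain, config_path, out_path):
    """Build the argument list for one Amass enumeration run.

    The `enum` subcommand and `-d` flag are assumptions; verify them
    against `amass enum -h` for your installed version.
    """
    return [
        "amass", "enum",
        "-d", domain,              # target domain (assumed flag)
        "-ip",                     # list the IP if resolved
        "-include-unresolvable",   # list names even if not resolved
        "-config", config_path,    # file holding the API keys
        "-o", out_path,            # where the results are saved
    ]


if __name__ == "__main__":
    cmd = build_amass_command("example.com", "config.ini", "amass_example.com.txt")
    # Print the command for review before running it.
    print(shlex.join(cmd))
    # To actually run it: subprocess.run(cmd, check=True)
```

Building the command as a list rather than a string keeps it safe to hand to `subprocess.run` without shell quoting issues.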

Rapid7’s Project Sonar is a LARGE collection of DNS records that can be found at https://opendata.rapid7.com/sonar.fdns_v2/

I like pulling both the A and CNAME records; the others are also good if you have the time and disk space. Once downloaded, I run the following command to extract all of the discovered names:

zgrep '\.binarydefense\.com' 2020*.json.gz | cut -d ',' -f 2 | cut -d ':' -f 2 | cut -d '"' -f 2 | sort -u > sonar_domains_binarydefense.com.txt
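If you would rather do the same extraction in Python, the sketch below is one way to go about it. It assumes each line in the Sonar files is a JSON object with a `name` key, which is my understanding of the format and is what the `cut` chain above relies on. Unlike the `zgrep` one-liner, it only matches on the record name (not on a CNAME target that happens to mention the domain), and it also keeps the bare domain itself. The function names are my own.

```python
import glob
import gzip
import json


def extract_names(lines, domain):
    """Return sorted unique record names that are `domain` or a subdomain of it.

    Assumes each line is a JSON object with a "name" key; lines that do not
    parse, or that lack the key, are skipped.
    """
    domain = domain.lower()
    suffix = "." + domain
    names = set()
    for line in lines:
        if domain not in line.lower():
            continue  # cheap pre-filter before paying for JSON parsing
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip truncated or malformed lines
        if not isinstance(record, dict):
            continue
        name = str(record.get("name", "")).lower().rstrip(".")
        if name == domain or name.endswith(suffix):
            names.add(name)
    return names and sorted(names) or []


def extract_from_files(pattern, domain):
    """Stream every gzip file matching `pattern` through extract_names."""
    def all_lines():
        for path in glob.glob(pattern):
            with gzip.open(path, "rt", encoding="utf-8", errors="replace") as handle:
                yield from handle
    return extract_names(all_lines(), domain)
```

For example, `extract_from_files("2020*.json.gz", "binarydefense.com")` returns the list of names, which you can then write out to a file. Expect it to be noticeably slower than `zgrep` on the full dataset.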

In addition to standard domain information, I like to pull domains that are registered using an email address related to the client. Domreg-enum.sh is similar to crt.sh’s checks, but instead of looking at certificates that match our domain, we’re looking at domain registrations made with an email address that matches our target domain.

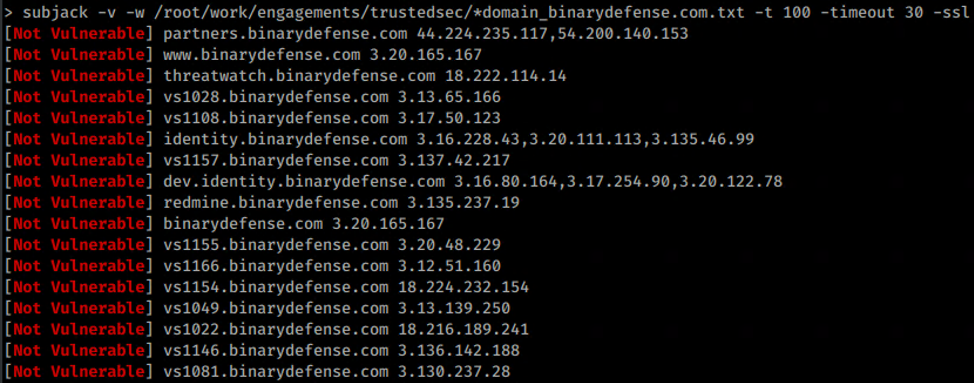

Lastly, with all of the domains collected, I like to run a quick check for subdomain hijacking. If you’re lucky, a hijackable subdomain is great for phishing and command and control (C2).
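Before running that check, I find it useful to merge everything into one de-duplicated list. The Python sketch below is one way to do it. It keeps only the first whitespace-separated token on each line, on the assumption that any trailing columns (such as the IP addresses that Amass prints with `-ip`) are not needed for the hijack check. The function name and file names are hypothetical.

```python
def merge_hostnames(*sources):
    """Merge iterables of lines into one sorted, de-duplicated hostname list.

    Only the first whitespace-separated token of each line is kept; blank
    lines are ignored, and names are lowercased with any trailing dot removed.
    """
    names = set()
    for source in sources:
        for line in source:
            tokens = line.split()
            if not tokens:
                continue
            name = tokens[0].lower().rstrip(".")
            if name:
                names.add(name)
    return sorted(names)


if __name__ == "__main__":
    # File names below are placeholders for your own output files.
    with open("amass_example.com.txt") as amass, open("sonar_example.com.txt") as sonar:
        merged = merge_hostnames(amass, sonar)
    with open("all_subdomains.txt", "w") as out:
        out.write("\n".join(merged) + "\n")
```

The merged file can then be handed to whichever takeover checker you use.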

Collect User Information:

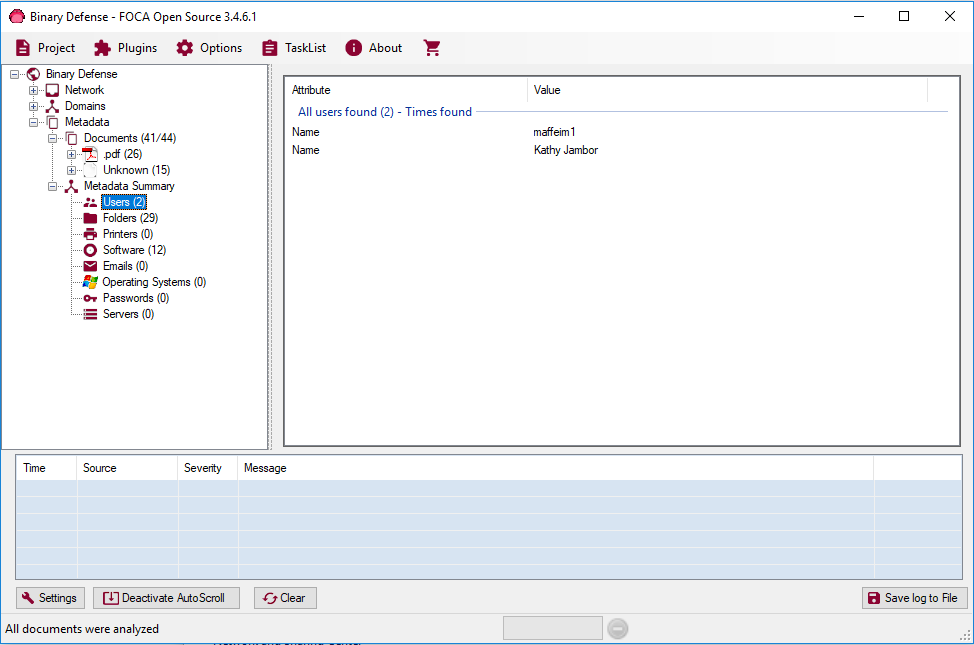

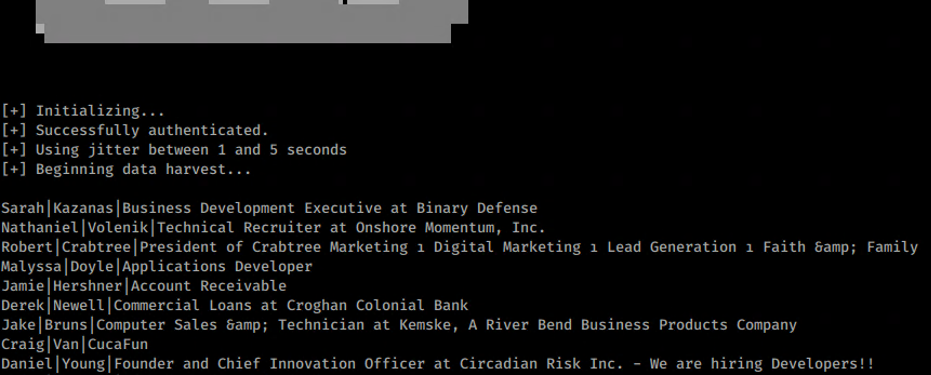

Next, we move on to user account enumeration. For this, I rely on several sources: data from previous breaches, metadata from FOCA, and LinkedIn scraping. For breach data, if you do some creative Googling you can find torrents to the Collections 1-5 breaches that can get you started.

The goal of obtaining user information could be to perform password spraying, send phishes, or identify key individuals such as IT administrators for future investigation.

Collect Email Information:

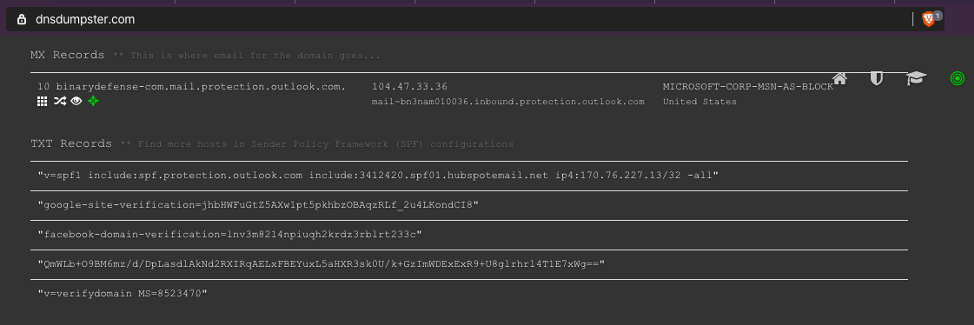

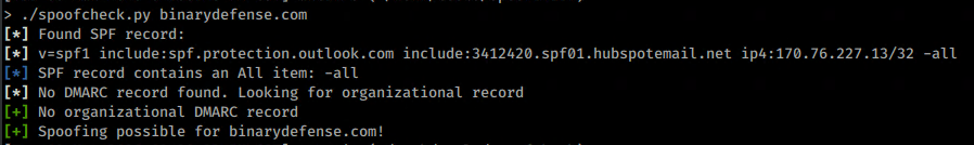

Next, I check the MX records to see what mail provider the client could be using. Proofpoint? Office 365? Internally hosted?
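A small Python sketch of that triage is below. It takes MX answers you have already collected (for example, the lines printed by `dig +short MX example.com`) and guesses the provider from the hostname. The suffix list is my own assumption based on commonly seen MX hostnames; it is far from exhaustive, and "possibly internally hosted" is only a guess when the MX host sits under the target domain.

```python
# Suffix-to-provider hints. These mappings are assumptions based on commonly
# seen MX hostnames, not an authoritative or complete list.
MX_HINTS = {
    "pphosted.com": "Proofpoint",
    "mail.protection.outlook.com": "Office 365",
}


def guess_mail_provider(mx_entries, target_domain):
    """Guess the mail provider from MX answers such as '10 mx.example.com.'."""
    target = target_domain.lower().rstrip(".")
    hosts = []
    for entry in mx_entries:
        tokens = entry.split()
        if tokens:
            # The hostname is the last token; a leading priority is ignored.
            hosts.append(tokens[-1].lower().rstrip("."))
    for host in hosts:
        for suffix, provider in MX_HINTS.items():
            if host == suffix or host.endswith("." + suffix):
                return provider
    for host in hosts:
        if host == target or host.endswith("." + target):
            return "Possibly internally hosted"
    return "Unknown"
```

For example, `guess_mail_provider(["10 example-com.mail.protection.outlook.com."], "example.com")` returns `"Office 365"`. Treat the result as a lead to verify, not a conclusion.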

Collect Used Technologies:

Using FOCA, LinkedIn, and job postings, I like to look for any indicators of which endpoint detection and response (EDR), anti-virus, Security Information and Event Management (SIEM), and similar products I might encounter.

Conclusion

As mentioned, the following are the items in my checklist of OSINT activities to perform at the beginning of an engagement. What’s important is to keep adding to it as needed.

The OSINT Checklist:

OSINT OPSEC

☐ Set up VPS

☐ Install Tools

☐ Set up VPN

Domain Collection

☐ Set up API keys

☐ Amass

☐ Rapid7 Sonar

User Collection

☐ Breach Data

☐ FOCA

☐ LinkedIn

☐ Website Directory Information

Email Collection

☐ DNS

Technology Collection

☐ LinkedIn

☐ Job Searches
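If you want to track the checklist per engagement rather than on paper, one option is to keep it as plain data. The Python sketch below holds an abbreviated copy of the list and renders it with completed items ticked. The structure and the `render` function are just one way I might lay it out, not a required format.

```python
# Abbreviated copy of the checklist; extend the lists as your process grows.
CHECKLIST = {
    "OSINT OPSEC": ["Install Tools"],
    "Domain Collection": ["Amass"],
    "User Collection": ["Breach Data", "FOCA", "LinkedIn"],
    "Email Collection": ["DNS"],
}


def render(checklist, done=()):
    """Render the checklist as text, ticking (section, item) pairs in `done`."""
    finished = set(done)
    lines = []
    for section, items in checklist.items():
        lines.append(section)
        for item in items:
            box = "☑" if (section, item) in finished else "☐"
            lines.append(f"{box} {item}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render(CHECKLIST, done=[("Domain Collection", "Amass")]))
```

Keying completed items by section and name keeps entries like LinkedIn, which appears under more than one heading, from being ticked in the wrong place.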