Working with data in JSON format

What is JSON?

JSON is an acronym for JavaScript Object Notation. For years it has been a common serialization format for APIs across the web. It has also gained favor as a format for logging, particularly structured logging. More recently, it has become common for command line applications to offer JSON as a format for their general output.

JSON can serialize data into a small set of common types: objects (key-value pairs), arrays, strings, numbers, Boolean values, and null. It is not without limitations, however. The first concerns parsing. Because of how JSON is structured, a JSON object generally must be loaded completely before it can be parsed. In most cases, this means the entire output of a command or web API must be obtained before processing can begin. The second limitation is the restriction to the types above. This leads to some lossy conversion during serialization. For example, if you need to represent a date and time, that information must be converted to a string, and the consumer has to know to convert it back. There are many ways to address these limitations, but that is beyond the scope of this post.
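To make the second limitation concrete, here is a minimal sketch using only Python's standard library. The event dictionary and its field names are made up for illustration.

```python
import json
from datetime import datetime, timezone

# A value JSON has no native type for (sample data, not from a real log).
event = {"name": "login", "when": datetime(2022, 8, 24, 0, 0, tzinfo=timezone.utc)}

# json.dumps raises TypeError for a datetime, so it must be converted first.
try:
    json.dumps(event)
    raised = False
except TypeError:
    raised = True

serialized = json.dumps({**event, "when": event["when"].isoformat()})

# After loading, the value is a plain string; the reader has to know
# that it should be converted back into a datetime.
loaded = json.loads(serialized)
restored = datetime.fromisoformat(loaded["when"])
print(type(loaded["when"]).__name__, restored == event["when"])
```

The type information is lost in transit: nothing in the serialized text marks the string as a timestamp.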

Examples

Here are a few examples of where you might encounter JSON-formatted data:

- MITRE ATT&CK stix data

- Output from certipy

- Output from CrackMapExec spider (plus)

- Google IP address ranges

- Microsoft Office 365 IP address ranges

Tooling

The following is a breakdown of some tooling that may help when working with JSON-formatted data:

gron

The tool gron flattens JSON into keys and values. It can also take the flattened version and reconstitute it into JSON. The keys are complex and encode the absolute path to the value. This makes it easier to work on the data with other tools that are targeted toward line-delimited data, such as grep, sed, awk, Bash, etc.

Available for most platforms, gron is written in Go, which provides a high degree of portability. Installation is straightforward with pre-compiled binaries available for Windows, Linux, macOS, and FreeBSD. It may also be available via your favorite package manager. If none of the above cover your platform, you may also use Go to install it.

Here is a brief example showing how to grep for email addresses within a nested JSON object:

$ wget -qO - 'https://jsonplaceholder.typicode.com/users' | gron | grep -F '.email'
json[0].email = "[email protected]";
json[1].email = "[email protected]";
json[2].email = "[email protected]";
json[3].email = "[email protected]";
json[4].email = "[email protected]";
json[5].email = "[email protected]";
json[6].email = "[email protected]";
json[7].email = "[email protected]";
json[8].email = "[email protected]";
json[9].email = "[email protected]";
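To show why this flattened form is so friendly to line-oriented tools, here is a rough Python sketch of the idea. It is not gron's implementation: it only emits leaf values (gron also emits lines for the containers themselves), and the sample document is made up.

```python
import json

def flatten(value, path="json"):
    """Yield (path, scalar) pairs, one per leaf, in a gron-like notation."""
    if isinstance(value, dict):
        for key, child in value.items():
            # Simple keys use dot notation; anything else is bracket-quoted.
            step = f".{key}" if key.isidentifier() else f"[{json.dumps(key)}]"
            yield from flatten(child, path + step)
    elif isinstance(value, list):
        for index, child in enumerate(value):
            yield from flatten(child, f"{path}[{index}]")
    else:
        yield path, value

# Hypothetical sample input.
users = json.loads('[{"name": "A", "contact": {"email": "a@example.com"}}]')
lines = [f"{p} = {json.dumps(v)};" for p, v in flatten(users)]
print(*lines, sep="\n")
```

Because every line carries the absolute path to its value, a plain substring match such as `.email` finds the value no matter how deeply it is nested.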

jless

The tool jless allows interactive viewing of JSON-formatted data in a text user interface. It applies syntax highlighting and allows you to navigate through a complex, nested JSON object and search with regex. This makes it easy to explore data in JSON format, especially if it is minified. It will even allow you to copy a jq style path, which is useful when trying to create and debug jq filters. This is done with the keybinding yq, which is one of many available keybindings.

Installing jless can be done via your favorite package manager, and pre-compiled binaries are available for macOS and Linux. Because jless is written in Rust, installing via Cargo is also an option on platforms that have neither a package nor a pre-compiled binary.

Here is a brief example showing jless, searching for 123, and copying the jq path to the clipboard:

dataclasses-json

The Python package dataclasses-json facilitates parsing JSON-formatted data into simple classes that are easy to work with in Python. Under the hood, it uses the Python package marshmallow to provide deserialization and parsing beyond the basics of Python's built-in JSON module. This makes it an excellent tool to keep in your kit for working with more complex data in JSON format. Installing dataclasses-json is done with pip (or another Python package manager such as pipenv or poetry).

Here is a brief example showing how to use dataclasses-json to load data into a dataclass:

import sys
import fileinput
from typing import List
from dataclasses import field
from dataclasses import dataclass
from dataclasses_json import LetterCase
from dataclasses_json import DataClassJsonMixin
from dataclasses_json import config

@dataclass(frozen=True)
class Company(DataClassJsonMixin):
    bs :str
    catch_phrase :str = field(metadata=config(letter_case=LetterCase.CAMEL))
    name :str

@dataclass(frozen=True)
class GeographicCoordinates(DataClassJsonMixin):
    latitude :str = field(metadata=config(field_name="lat"))
    longitude :str = field(metadata=config(field_name="lng"))

@dataclass(frozen=True)
class Address(DataClassJsonMixin):
    street :str
    suite :str
    city :str
    zipcode :str
    geo :GeographicCoordinates

@dataclass(frozen=True)
class Person(DataClassJsonMixin):
    index :int = field(metadata=config(field_name="id"))
    name :str
    phone :str
    username :str
    email :str
    website :str
    company :Company
    address :Address

def cli(*args :List[str]) -> int:
    json_input = '\n'.join(list(fileinput.input(encoding="utf-8")))
    print(
        *map(
            lambda p: f'{p.index:>3} {p.name:<25} {p.email}',
            Person.schema().loads(json_input, many=True)
        ),
        sep='\n',
        end='\n\n'
    )
    return 0

if ('__main__' == __name__):
    sys.exit(cli(sys.argv))

JSON diff and patch

The command line tool (and Go library) jd can be used to diff and patch data in JSON format. If you have two similar files with JSON-formatted data and wish to isolate the differences, jd is the perfect tool for the job. It can be useful when you want to replace something nested deep within some JSON-formatted data.

Installation may be a little more complex than other tools listed here. It is available via the brew package manager for macOS or via the Go package manager. It can also be executed as a docker container. And finally, it is available on the web.

Here is a brief example showing what a diff from jd looks like:

$ cat jd-first.json
[
  { "id": 1, "text": "buy milk", "done": false },
  { "id": 1, "text": "learn italian", "done": false },
  { "id": 1, "text": "clean oven", "done": false }
]
$ cat jd-final.json
[
  { "id": 1, "text": "buy milk", "done": true },
  { "id": 1, "text": "learn italian", "done": false },
  { "id": 1, "text": "clean oven", "done": false },
  { "id": 1, "text": "make pickles", "done": false }
]
$ jd jd-first.json jd-final.json
@ [0,"done"]
- false
+ true
@ [-1]
+ {"done":false,"id":1,"text":"make pickles"}

jq

The tool jq supports complex filtering and transforming of data in JSON format. It has a huge feature set and is able to cut through a large file of JSON-formatted data and mutate it into another layout.

Installation is very straightforward because jq is a single binary with no dependencies. Pre-compiled binaries are available from the website. It is also available via many package managers.

If you do not want to install it, there is also an online version available.

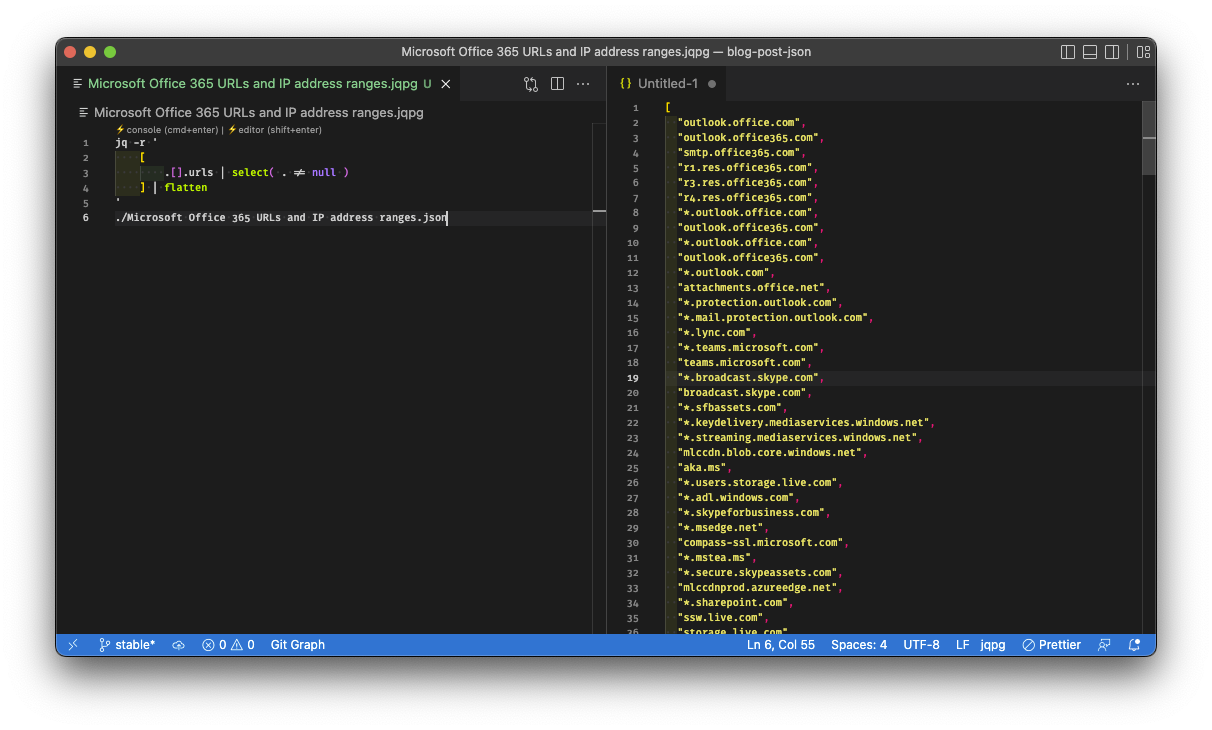

Bonus

The complexity of jq can make working on a filter a steep climb. To help iterate on a filter, it can be helpful to have a plugin for your editor. Visual Studio Code jq playground is a great example. It allows you to work on one or more filters, rendering the output as you go.

Below is a brief example showing what can be done with jq (the screenshot is of Visual Studio Code, but running jq from the command line is no different):

jq (Python package)

The Python package jq provides bindings to the jq command line tool above. It allows you to use the best of both jq and Python to construct more complex processing of data in JSON format. Installing the jq package is done with pip (or another Python package manager like pipenv or poetry).

An example of using this package can be found below.

Practical Examples

Filtering for scopes in Google IP Address ranges

This example will use the Google IP address ranges data set.

The data set provides a nested array of JSON objects. We will use jq to filter and flatten it into a single array of IPv4 prefixes.

$ wget -qO - 'https://www.gstatic.com/ipranges/goog.json' | jq '[ .prefixes[] | .ipv4Prefix | select( . != null ) ] | unique'
[
  "104.154.0.0/15",
  "104.196.0.0/14",
  "104.237.160.0/19",
  "107.167.160.0/19",
  "107.178.192.0/18",
  "108.170.192.0/18",
  "108.177.0.0/17",
  "108.59.80.0/20",
  "130.211.0.0/16",
  "136.112.0.0/12",
  "142.250.0.0/15",
  "146.148.0.0/17",
  "162.216.148.0/22",
  "162.222.176.0/21",
  "172.110.32.0/21",
  "172.217.0.0/16",
  "172.253.0.0/16",
  "173.194.0.0/16",
  "173.255.112.0/20",
  "192.158.28.0/22",
  "192.178.0.0/15",
  "193.186.4.0/24",
  "199.192.112.0/22",
  "199.223.232.0/21",
  "199.36.154.0/23",
  "199.36.156.0/24",
  "207.223.160.0/20",
  "208.117.224.0/19",
  "208.65.152.0/22",
  "208.68.108.0/22",
  "208.81.188.0/22",
  "209.85.128.0/17",
  "216.239.32.0/19",
  "216.58.192.0/19",
  "216.73.80.0/20",
  "23.236.48.0/20",
  "23.251.128.0/19",
  "34.0.0.0/15",
  "34.128.0.0/10",
  "34.16.0.0/12",
  "34.2.0.0/16",
  "34.3.0.0/23",
  "34.3.128.0/17",
  "34.3.16.0/20",
  "34.3.3.0/24",
  "34.3.32.0/19",
  "34.3.4.0/24",
  "34.3.64.0/18",
  "34.3.8.0/21",
  "34.32.0.0/11",
  "34.4.0.0/14",
  "34.64.0.0/10",
  "34.8.0.0/13",
  "35.184.0.0/13",
  "35.192.0.0/14",
  "35.196.0.0/15",
  "35.198.0.0/16",
  "35.199.0.0/17",
  "35.199.128.0/18",
  "35.200.0.0/13",
  "35.208.0.0/12",
  "35.224.0.0/12",
  "35.240.0.0/13",
  "64.15.112.0/20",
  "64.233.160.0/19",
  "66.102.0.0/20",
  "66.22.228.0/23",
  "66.249.64.0/19",
  "70.32.128.0/19",
  "72.14.192.0/18",
  "74.114.24.0/21",
  "74.125.0.0/16",
  "8.34.208.0/20",
  "8.35.192.0/20",
  "8.8.4.0/24",
  "8.8.8.0/24"
]

Now, to use this for something more: say you wanted to allow traffic from all of these ranges. You could change the query slightly to produce a line-delimited list that can be passed to xargs and then on to iptables.

$ wget -qO - 'https://www.gstatic.com/ipranges/goog.json' | jq -r '[ .prefixes[] | .ipv4Prefix | select( . != null ) ] | unique | join("\n")' | xargs -I '{}' iptables -I INPUT -s '{}' -j ACCEPT

Explanation

- wget downloads the file, set to quiet mode with -q (meaning it does not output info other than the file), and the output -O is set to standard out with the - character.
- jq receives the piped output and:
  - creates a new array by:
    - filtering for the prefixes member as an array
    - filtering for the ipv4Prefix member of each item from the array
    - filtering for values that are not null
  - filters the new array to ensure each entry in it is unique
  - joins the new array with newlines to output the new array's items one per line
- xargs receives the piped output, then calls iptables for each line, adding allow rules to the beginning of the INPUT chain.
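For readers who find the jq filter dense, the same steps can be sketched in plain Python. The document below is a tiny stand-in for goog.json. Its two IPv4 values appear in the output above, while the ipv6Prefix entry and its value are assumptions added to show the null filtering.

```python
import json

# Hypothetical sample shaped like goog.json: a "prefixes" array whose items
# carry either an "ipv4Prefix" or (assumed) an "ipv6Prefix" member.
document = json.loads('''
{"prefixes": [
  {"ipv4Prefix": "8.8.8.0/24"},
  {"ipv6Prefix": "2001:db8::/32"},
  {"ipv4Prefix": "8.8.4.0/24"},
  {"ipv4Prefix": "8.8.8.0/24"}
]}
''')

# .prefixes[] | .ipv4Prefix  (a missing member becomes None, like jq's null)
ipv4 = [item.get("ipv4Prefix") for item in document["prefixes"]]

# select( . != null )
ipv4 = [prefix for prefix in ipv4 if prefix is not None]

# unique  (jq sorts the array and removes duplicates)
unique = sorted(set(ipv4))

# join("\n")
print("\n".join(unique))
```

Each line of the jq filter maps onto one statement here, which can help when debugging a filter stage by stage.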

Back-correlating IP addresses from firewall logs to their Microsoft Office 365 domain

In this example, we will use the Microsoft Office 365 IP address ranges data set, a fake access log file, and a Python script.

The data provides a list of objects that describe the various service areas and their URLs and IP address ranges (in CIDR form). For this example, we will use the jq binding package and a Python script.

#!/usr/bin/env python3
import sys
import json
import fileinput
import ipaddress
from typing import List
from pathlib import Path
from functools import reduce
from functools import partial
import jq

def search_for_address(lookup_table, result_dict, line):
    # take the address from the front of the line
    address = ipaddress.IPv4Address(line.split(' ', 1)[0])
    result_found = False
    for name,list_of_networks in lookup_table.items():
        if result_found:
            break
        for network_str in list_of_networks:
            if (address not in ipaddress.IPv4Network(network_str)):
                # address is not one of interest for this range, continue on
                continue
            else:
                # add or update dict entry with the line
                value = result_dict.get(name, [])
                value.append(line.rstrip())
                result_dict.update({name: value})
                # set flag to true in order to break out of the outer loop
                result_found = True
                break
    return result_dict

def cli(*args :List[str]) -> int:
    try:
        filter_text = '''\
            .[] | select( .ips != null ) | {
                (.serviceAreaDisplayName): .ips | map(select(contains(":") | not))
            }
        '''
        # read in and remove carriage returns
        filter_input = json.loads(''.join(list(
            map(
                lambda x: x.rstrip(),
                fileinput.input(encoding="utf-8")
            )
        )))
        # run jq filter against the input
        filter_output = jq.compile(filter_text).input(filter_input).all()
        names_to_address_ranges = dict()
        # flatten the jq filter output (list of dict into a single dict with a combined list of unique networks)
        for x in filter_output:
            for name,v in x.items():
                names_to_address_ranges.update({
                    name: list(set(names_to_address_ranges.get(name, []) + v))
                })
        access_log = (Path() / 'access.log').resolve()
        with access_log.open(mode='rt', encoding='utf-8') as handle:
            # call search_for_address against every line, combining the result into a single dict
            result = reduce(partial(search_for_address, names_to_address_ranges), handle, dict())
    except Exception as ex:
        print(ex, file=sys.stderr)
        return 1
    else:
        for k,v in result.items():
            print(k, "=" * len(k), *v, sep='\n', end='\n\n')
        return 0

if ('__main__' == __name__):

    sys.exit(cli(*sys.argv))

$ wget -qO - 'https://endpoints.office.com/endpoints/worldwide?clientrequestid=b10c5ed1-bad1-445f-b386-b919946339a7' | python -u correlate.py
Exchange Online
===============
52.103.78.154 - - [24/Aug/2022:00:00:00 ] "GET /index.html HTTP/1.1" 302 706 "-" "Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:16.0.1) Gecko/20121011 Firefox/16.0.1"
52.101.250.115 - - [24/Aug/2022:00:00:18 ] "TRACE /eligendi/voluptate/asperiores/in/quis/perferendis/pariatur HTTP/1.1" 500 538 "-" "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.2 (KHTML, like Gecko) Chrome/22.0.1216.0 Safari/537.2"
40.93.149.94 - - [24/Aug/2022:00:03:03 ] "PUT /index.html HTTP/1.1" 500 708 "-" "Mozilla/5.0 (X11; CrOS i686 2268.111.0) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.57 Safari/536.11"
52.101.166.116 - - [24/Aug/2022:00:05:51 ] "DELETE /velit/dolores/at/aperiam/quaerat/quibusdam/enim HTTP/1.1" 500 445 "-" "Mozilla/5.0 (compatible; MSIE 10.0; Macintosh; Intel Mac OS X 10_7_3; Trident/6.0)"
104.47.111.206 - - [24/Aug/2022:00:11:05 ] "DELETE /dolore/adipisci/minus/laudantium/veritatis/et/repudiandae/qui HTTP/1.1" 500 191 "-" "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US);"
40.107.183.115 - - [24/Aug/2022:00:18:16 ] "DELETE /amet/et/alias/quod/numquam/libero/nihil HTTP/1.1" 200 177 "-" "Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:16.0.1) Gecko/20121011 Firefox/16.0.1"
40.92.113.173 - - [24/Aug/2022:00:23:04 ] "OPTIONS /amet/et/alias/quod/numquam/libero/nihil HTTP/1.1" 200 76 "-" "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US);"
40.93.27.4 - - [24/Aug/2022:00:23:12 ] "POST /in/ipsum/possimus/voluptate/consequatur HTTP/1.1" 202 304 "-" "Mozilla/5.0 (compatible; MSIE 10.0; Macintosh; Intel Mac OS X 10_7_3; Trident/6.0)"
40.92.108.212 - - [24/Aug/2022:00:26:40 ] "PUT /quas/repellendus/voluptatem/rerum/quis/ut/itaque/accusamus HTTP/1.1" 302 526 "-" "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.2 (KHTML, like Gecko) Chrome/22.0.1216.0 Safari/537.2"
40.107.168.15 - - [24/Aug/2022:00:27:18 ] "HEAD / HTTP/1.1" 500 871 "-" "Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:16.0.1) Gecko/20121011 Firefox/16.0.1"

Microsoft 365 Common and Office Online
======================================
52.109.14.150 - - [24/Aug/2022:00:07:24 ] "POST /dolore/adipisci/minus/laudantium/veritatis/et/repudiandae/qui HTTP/1.1" 302 626 "-" "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0; GTB7.4; InfoPath.2; SV1; .NET CLR 3.3.69573; WOW64; en-US)"
52.109.57.226 - - [24/Aug/2022:00:15:05 ] "CONNECT /labore/non/eum/quasi/sapiente HTTP/1.1" 302 598 "-" "Mozilla/5.0 (compatible; MSIE 10.0; Macintosh; Intel Mac OS X 10_7_3; Trident/6.0)"
52.109.181.82 - - [24/Aug/2022:00:16:48 ] "HEAD /quis/quis/iure/vero/eaque/nisi/ad/molestiae HTTP/1.1" 302 406 "-" "Opera/9.80 (X11; Linux i686; U; ru) Presto/2.8.131 Version/11.11"
52.111.130.50 - - [24/Aug/2022:00:18:05 ] "OPTIONS /iure/recusandae/tempora/similique/sequi/culpa HTTP/1.1" 404 355 "-" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_4) AppleWebKit/537.13 (KHTML, like Gecko) Chrome/24.0.1290.1 Safari/537.13"
52.110.169.110 - - [24/Aug/2022:00:20:03 ] "POST /index.html HTTP/1.1" 500 1024 "-" "Mozilla/5.0 (iPad; CPU OS 6_0 like Mac OS X) AppleWebKit/536.26 (KHTML, like Gecko) Version/6.0 Mobile/10A5355d Safari/8536.25"
52.111.141.135 - - [24/Aug/2022:00:25:36 ] "HEAD /velit/dolores/at/aperiam/quaerat/quibusdam/enim HTTP/1.1" 200 1009 "-" "Mozilla/5.0 (X11; Ubuntu; Linux i686; rv:15.0) Gecko/20100101 Firefox/15.0.1"
52.109.246.226 - - [24/Aug/2022:00:38:21 ] "HEAD /ea/consequatur/omnis/voluptatibus/autem/earum/ut/quis/doloremque/ut/quaerat/in HTTP/1.1" 400 456 "-" "Mozilla/5.0 (iPad; CPU OS 6_0 like Mac OS X) AppleWebKit/536.26 (KHTML, like Gecko) Version/6.0 Mobile/10A5355d Safari/8536.25"
52.111.114.23 - - [24/Aug/2022:00:44:45 ] "OPTIONS /eligendi/voluptate/asperiores/in/quis/perferendis/pariatur HTTP/1.1" 202 326 "-" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_4) AppleWebKit/537.13 (KHTML, like Gecko) Chrome/24.0.1290.1 Safari/537.13"

Explanation

(More details can be found in comments in the Python script.)

- wget downloads the file, set to quiet mode with -q (meaning it does not output info other than the file), and the output -O is set to standard out with the - character.
- The Python script receives the piped output and:
  - applies the jq filter to get a list of objects with the name and a list of IP address ranges
  - combines the list of dicts into a single dict
  - reads the access log
  - searches for and collects matches in another dict
  - prints the lists
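The core of the lookup can be tried without the jq package or a network connection. The sketch below uses only the standard library. The endpoint objects and their CIDR ranges are made-up sample values shaped like the data set, not an authoritative list, and service_for is a hypothetical helper name.

```python
import ipaddress

# Hypothetical sample shaped like the endpoints data: objects may lack "ips",
# and "ips" may mix IPv4 and IPv6 ranges.
endpoints = [
    {"serviceAreaDisplayName": "Exchange Online",
     "ips": ["52.100.0.0/14", "2603:1016::/36"]},
    {"serviceAreaDisplayName": "Exchange Online",
     "urls": ["outlook.office.com"]},
    {"serviceAreaDisplayName": "Microsoft 365 Common and Office Online",
     "ips": ["52.108.0.0/14"]},
]

# Build name -> list of IPv4 networks, skipping objects without "ips"
# and skipping IPv6 ranges (the ones containing ":").
lookup = {}
for entry in endpoints:
    for cidr in entry.get("ips") or []:
        if ":" not in cidr:
            lookup.setdefault(entry["serviceAreaDisplayName"], []).append(
                ipaddress.IPv4Network(cidr))

def service_for(address_text):
    """Return the first service area whose ranges contain the address, else None."""
    address = ipaddress.IPv4Address(address_text)
    for name, networks in lookup.items():
        if any(address in network for network in networks):
            return name
    return None

for sample in ("52.103.78.154", "52.109.14.150", "203.0.113.7"):
    print(sample, "->", service_for(sample))
```

Converting each range to a network object once, up front, avoids re-parsing the CIDR strings for every log line, which matters once the access log grows large.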

Filtering certipy output for Misconfigured Certificate Templates

This example will use the technique described for ESC1 in Certified Pre-Owned, certipy JSON output captured in a file, gron, and a Bash script for processing.

#!/usr/bin/env bash

# printf is used to preserve newlines (unlike some implementations of echo)
# printf %s is used to preserve \\ so that output may be un-gronned

function get-enabled {
    printf %s "$1" | grep -F '.Enabled = true;' | sed 's/^json\(.*\)\.Enabled = true\;/\1/;s/\(.*\)E$/\1/;s/\(.*\)\.$/\1/'
}

# ."Client Authentication"==true?
function get-requires-manager-approval {
    printf %s "$1" | grep -F '["Requires Manager Approval"] = false;' | sed 's/^json\(.*\)\["Requires Manager Approval"] = false\;/\1/;s/\(.*\)E$/\1/;s/\(.*\)\.$/\1/'
}

function get-enrollee-supplies-subject {
    printf %s "$1" | grep -F '["Enrollee Supplies Subject"] = true;' | sed 's/^json\(.*\)\["Enrollee Supplies Subject"] = true\;/\1/;s/\(.*\)E$/\1/;s/\(.*\)\.$/\1/'
}

function get-client-authentication {
    printf %s "$1" | grep -F '["Client Authentication"] = true;' | sed 's/^json\(.*\)\["Client Authentication"] = true\;/\1/;s/\(.*\)E$/\1/;s/\(.*\)\.$/\1/'
}

function has-rights {
    printf %s "$1" | grep -F "$2" | grep -F '.Permissions["Enrollment Permissions"]["Enrollment Rights"]' \
        | grep -E 'Authenticated Users|Domain Computers|Domain Users' 2>&1 >/dev/null
    return $?
}

GRON_FORMATTED=$(gron -m --no-sort "$1")

# intersect the template identifiers that satisfy each criterion
INTERESTING_TEMPLATES=$(
    comm -12 <(
        get-enabled "${GRON_FORMATTED}") <(
        comm -12 <(
            get-requires-manager-approval "${GRON_FORMATTED}") <(
            comm -12 <(
                get-enrollee-supplies-subject "${GRON_FORMATTED}") <(
                get-client-authentication "${GRON_FORMATTED}"
    )))
)

RESULT=""
while IFS= read -r x
do
    if has-rights "${GRON_FORMATTED}" "${x}"
    then
        RESULT="${RESULT}$(printf %s "${GRON_FORMATTED}" | grep -F "${x}")"
    fi
# quoted so that the newlines between identifiers are always preserved
done <<< "${INTERESTING_TEMPLATES}"

printf %s "${RESULT}" | gron --no-sort --ungron

$ ./esc1_filter_from_certipy.sh 20220802122105_Certipy4_SecLab.json

{

"Certificate Templates": {

"0": {

"Any Purpose": false,

"Authorized Signatures Required": 0,

"Certificate Name Flag": [

"SubjectAltRequireDomainDns",

"EnrolleeSuppliesSubject"

],

"Client Authentication": true,

"Display Name": "ESC1(4096)",

"Enabled": true,

"Enrollee Supplies Subject": true,

"Enrollment Agent": false,

"Enrollment Flag": [

"PublishToDs"

],

"Extended Key Usage": [

"Client Authentication",

"Server Authentication",

"Smart Card Logon",

"KDC Authentication"

],

"Permissions": {

"Enrollment Permissions": {

"Enrollment Rights": [

"SECLAB.TEST.LOCAL\\Authenticated Users"

]

},

"Object Control Permissions": {

"Owner": "SECLAB.TEST.LOCAL\\Admin Mario",

"Write Dacl Principals": [

"SECLAB.TEST.LOCAL\\Admin Mario"

],

"Write Owner Principals": [

"SECLAB.TEST.LOCAL\\Admin Mario"

],

"Write Property Principals": [

"SECLAB.TEST.LOCAL\\Admin Mario"

]

}

},

"Private Key Flag": [

"16777216",

"65536"

],

"Renewal Period": "6 weeks",

"Requires Key Archival": false,

"Requires Manager Approval": false,

"Template Name": "ESC1(4096)",

"Validity Period": "1 year",

"[!] Vulnerabilities": {

"ESC1": "'SECLAB.TEST.LOCAL\\\\Authenticated Users' can enroll, enrollee supplies subject and template allows client authentication"

}

},

"3": {

"Any Purpose": false,

"Authorized Signatures Required": 0,

"Certificate Name Flag": [

"SubjectAltRequireDomainDns",

"EnrolleeSuppliesSubject"

],

"Client Authentication": true,

"Display Name": "ESC1",

"Enabled": true,

"Enrollee Supplies Subject": true,

"Enrollment Agent": false,

"Enrollment Flag": [

"PublishToDs"

],

"Extended Key Usage": [

"KDC Authentication",

"Smart Card Logon",

"Server Authentication",

"Client Authentication"

],

"Permissions": {

"Enrollment Permissions": {

"Enrollment Rights": [

"SECLAB.TEST.LOCAL\\Authenticated Users"

]

},

"Object Control Permissions": {

"Owner": "SECLAB.TEST.LOCAL\\Admin Mario",

"Write Dacl Principals": [

"SECLAB.TEST.LOCAL\\Admin Mario"

],

"Write Owner Principals": [

"SECLAB.TEST.LOCAL\\Admin Mario"

],

"Write Property Principals": [

"SECLAB.TEST.LOCAL\\Admin Mario"

]

}

},

"Private Key Flag": [

"16777216",

"65536"

],

"Renewal Period": "6 weeks",

"Requires Key Archival": false,

"Requires Manager Approval": false,

"Template Name": "ESC1",

"Validity Period": "1 year",

"[!] Vulnerabilities": {

"ESC1": "'SECLAB.TEST.LOCAL\\\\Authenticated Users' can enroll, enrollee supplies subject and template allows client authentication"

}

}

}

}

Explanation

- gron is used to turn the JSON-formatted data into a line-delimited format, which is stored in a variable.
- That variable is run through a series of Bash functions, each of which outputs the identifiers of the templates that match one criterion.
- The template identifiers are then compared with comm to filter down to the ones that meet all of the criteria.
- The identified templates are then checked against a single, final criterion.
- If a template matches, the result is appended to a variable.
- gron is used to turn the final result variable back into JSON.

Note: This code should not be considered a replacement for a good understanding of ESC1. It is an example of how to use gron to then leverage other command line applications in order to facilitate handling data in JSON format.
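For comparison, the same criteria can be expressed directly against the parsed JSON. This is only a sketch of the filtering logic, not a replacement for the script or for certipy's own checks. The sample data is trimmed down and partly invented (the template named "Other" is hypothetical), and it uses only key names visible in the output above.

```python
import json

# Hypothetical, trimmed-down sample using key names from the certipy output.
certipy_output = json.loads(r'''
{"Certificate Templates": {
  "0": {"Template Name": "ESC1", "Enabled": true,
        "Requires Manager Approval": false,
        "Enrollee Supplies Subject": true, "Client Authentication": true,
        "Permissions": {"Enrollment Permissions": {"Enrollment Rights": [
            "SECLAB.TEST.LOCAL\\Authenticated Users"]}}},
  "1": {"Template Name": "Other", "Enabled": true,
        "Requires Manager Approval": true,
        "Enrollee Supplies Subject": true, "Client Authentication": true,
        "Permissions": {"Enrollment Permissions": {"Enrollment Rights": [
            "SECLAB.TEST.LOCAL\\Domain Users"]}}}
}}
''')

# The same broad groups the has-rights function greps for.
BROAD_GROUPS = ("Authenticated Users", "Domain Computers", "Domain Users")

def is_interesting(template):
    """Apply the criteria used by the Bash functions to one template object."""
    rights = (template.get("Permissions", {})
                      .get("Enrollment Permissions", {})
                      .get("Enrollment Rights", []))
    return (
        template.get("Enabled") is True
        and template.get("Requires Manager Approval") is False
        and template.get("Enrollee Supplies Subject") is True
        and template.get("Client Authentication") is True
        and any(group in principal for principal in rights for group in BROAD_GROUPS)
    )

matches = {key: template
           for key, template in certipy_output["Certificate Templates"].items()
           if is_interesting(template)}
print(json.dumps(matches, indent=2))
```

The gron approach has the advantage of working with tools already on most systems, while this form keeps each template together as one object and avoids the set intersections.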

Deserialize complex data sets such as MITRE ATT&CK enterprise stix data

This example will use the MITRE ATT&CK stix data, the dataclasses-json Python package, and a Python script. It will process the large data set and, in this case, filter it for all the names of identified threat actors documented within the MITRE ATT&CK stix data.

#!/usr/bin/env python3
import json
from typing import Set
from typing import Dict
from typing import List
from typing import Optional
from pathlib import Path
from functools import reduce
from dataclasses import field
from dataclasses import dataclass
from dataclasses_json import Undefined
from dataclasses_json import config
from dataclasses_json import dataclass_json

@dataclass_json(undefined=Undefined.EXCLUDE)
@dataclass(frozen=True)
class MitreAttackObject:
    type :str
    stix_id :str = field(metadata=config(field_name='id'))
    name :Optional[str] = None

@dataclass_json(undefined=Undefined.EXCLUDE)
@dataclass(frozen=True)
class MitreAttackIntrusionSet(MitreAttackObject):
    aliases :List[str] = field(default_factory=list)
    deprecated :bool = field(default=False, metadata=config(field_name='x_mitre_deprecated'))

def mitre_attack_object_from_dict(d :Dict) -> MitreAttackObject:
    object_type = d.get('type')
    if ('intrusion-set' == object_type):
        return MitreAttackIntrusionSet.from_dict(d)
    else:
        return MitreAttackObject.from_dict(d)

def collect_types(collection, object_):
    collection.update({object_.type: 1 + collection.get(object_.type, 0)})
    return collection

def type_is_intrusion_set(dataclass_object :MitreAttackObject) -> bool:
    return ('intrusion-set' == dataclass_object.type)

def collect_names(collection :Set[str], dataclass_object :MitreAttackObject) -> Set[str]:
    collection.add(dataclass_object.name)
    collection.update(dataclass_object.aliases)
    return collection

intruder_names = set()
json_fullname = (Path(__file__).parent / 'enterprise-attack-11.3.json').resolve()
with json_fullname.open(encoding='utf-8') as handle:
    objects = list(map(mitre_attack_object_from_dict, json.load(handle)['objects']))

object_types = reduce(collect_types, objects, dict())
print(f'file contains {object_types.get("intrusion-set"):,d} intrusion-sets')
print(
    *list(sorted(
        reduce(
            collect_names,
            filter(type_is_intrusion_set, objects),
            set()
        ),
        key=str.casefold
    )),
    sep='\n',
    end='\n\n'
)

Explanation

- The large file containing JSON-formatted data is read into memory and parsed with Python's built-in json module.
- A function is used to map the objects within the data set into dataclasses.
- The dataclasses are used to create a dict with the names of the types and the number of types.
- The number of intrusion sets (obtained from the dict above) is written to standard out.
- A list of the names is printed from sorting (without case sensitivity), reduced from the full list of dataclass objects and filtered by their type.
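As a point of comparison, the filtering and sorting steps can be sketched with the standard library alone. The bundle below is a made-up miniature: it assumes the same top-level "objects" list as the real file, but every entry in it is invented for illustration.

```python
import json

# Hypothetical miniature bundle; all names and ids are invented.
bundle = json.loads('''
{"objects": [
  {"type": "intrusion-set", "id": "intrusion-set--0001", "name": "Example Group",
   "aliases": ["Example Group", "EG-1"]},
  {"type": "attack-pattern", "id": "attack-pattern--0002", "name": "Example Technique"},
  {"type": "intrusion-set", "id": "intrusion-set--0003", "name": "another group"}
]}
''')

names = set()
for obj in bundle["objects"]:
    # Keep only intrusion sets, as type_is_intrusion_set does.
    if obj.get("type") != "intrusion-set":
        continue
    names.add(obj["name"])
    # "aliases" is optional, so default to an empty list.
    names.update(obj.get("aliases", []))

# Sort without case sensitivity, as the script does with str.casefold.
ordered = sorted(names, key=str.casefold)
print(*ordered, sep="\n")
```

What the dataclasses add over this version is a typed, named shape for each object, which pays off as more fields and object types come into play.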

Look for access time modification in CrackMapExec spider (plus) output over time

This example will use a couple of files of CrackMapExec spider (plus) JSON-formatted output and jd. With jd, we can quickly isolate and view the differences between the two scans:

$ jd cme_output_20220801.json cme_output_20220802.json
@ ["Share1","HelpDesk/Passwords.txt","mtime_epoch"]
- "2022-08-01 20:19:11"
+ "2022-08-02 08:32:12"
@ ["Share1","HelpDesk/Passwords.txt","size"]
- "1.27 KB"
+ "1.28 KB"
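To give a feel for what a structural diff does, here is a simplified Python sketch. It is not how jd is implemented: it only descends into objects, compares arrays as whole values, and treats a missing key the same as null. The two sample documents contain only the fields shown in the output above.

```python
import json

def diff(old, new, path=()):
    """Yield (path, old_value, new_value) for every value that differs."""
    if isinstance(old, dict) and isinstance(new, dict):
        # Visit the union of keys so additions and removals are both seen.
        for key in sorted(old.keys() | new.keys()):
            yield from diff(old.get(key), new.get(key), path + (key,))
    elif old != new:
        yield path, old, new

# Sample data limited to the fields visible in the jd output.
first = {"Share1": {"HelpDesk/Passwords.txt": {
    "mtime_epoch": "2022-08-01 20:19:11", "size": "1.27 KB"}}}
second = {"Share1": {"HelpDesk/Passwords.txt": {
    "mtime_epoch": "2022-08-02 08:32:12", "size": "1.28 KB"}}}

changes = list(diff(first, second))
for path, before, after in changes:
    print(f"@ {json.dumps(list(path))}\n- {json.dumps(before)}\n+ {json.dumps(after)}")
```

The path to each change is what makes this more useful than a line-based diff: the result stays the same even if the two files are formatted or ordered differently.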

Closing Thoughts

Working with JSON-formatted data need not be a chore. There are many great tools to assist with processing and utilizing it in numerous ways. Above, I have attempted to present my favorite tools for working with JSON-formatted data as well as some practical examples to better illustrate their uses. As evidenced, JSON-formatted data is already prevalent on the web and is making its way as a standardized output format for many other tools. I hope some of the examples above prompt you to find new ways to work with JSON-formatted data and to embrace it as the useful container that it can be.

Thanks

This blog would not have been possible without the following people:

- Adam Compton @tatanus
- Julie Daymut
- Justin Bollinger @bandrel
- Larry Spohn @Spoonman1091
- Lou Scicchitano @LouScicchitano
- Mike Spitzer